Learning SQL Exercise

I’ve been using Alan Beaulieu’s Learning SQL to teach my SQL Development class with MySQL 8. It’s a great book overall, but Chapter 12 lacks a complete exercise. Here’s everything the author provides to the reader, which is too little for most readers to work through the concept of a transaction.

Exercise 12-1

Generate a unit of work to transfer $50 from account 123 to account 789. You will need to insert two rows into the transaction table and update two rows in the account table. Use the following table definitions/data:

Account:

    account_id  avail_balance  last_activity_date
    ----------  -------------  -------------------
    123         450            2019-07-10 20:53:27
    789         125            2019-06-22 15:18:35

Transaction:

    txn_id  txn_date    account_id  txn_type_cd  amount
    ------  ----------  ----------  -----------  ------
    1001    2019-05-15  123         C            500
    1002    2019-06-01  789         C            75

Use txn_type_cd = ‘C’ to indicate a credit (addition), and use txn_type_cd = ‘D’ to indicate a debit (subtraction).

New Exercise 12-1

The problem with the exercise description is that the sakila database, which is used for most of the book, doesn’t have transaction or account tables. Nor are there any instructions about general accounting practices or principles. These missing components make it hard for students to understand how to build the transaction.

The first thing the exercise’s problem definition should qualify is how to create the account and transaction tables, like:

- Create the account table, like this with an initial auto incrementing value of 1001:

      -- +--------------------+--------------+------+-----+---------+----------------+
      -- | Field              | Type         | Null | Key | Default | Extra          |
      -- +--------------------+--------------+------+-----+---------+----------------+
      -- | account_id         | int unsigned | NO   | PRI | NULL    | auto_increment |
      -- | avail_balance      | double       | NO   |     | NULL    |                |
      -- | last_activity_date | datetime     | NO   |     | NULL    |                |
      -- +--------------------+--------------+------+-----+---------+----------------+

- Create the transaction table, like this with an initial auto incrementing value of 1001:

      -- +----------------+--------------+------+-----+---------+----------------+
      -- | Field          | Type         | Null | Key | Default | Extra          |
      -- +----------------+--------------+------+-----+---------+----------------+
      -- | txn_id         | int unsigned | NO   | PRI | NULL    | auto_increment |
      -- | txn_date       | datetime     | YES  |     | NULL    |                |
      -- | account_id     | int unsigned | YES  |     | NULL    |                |
      -- | txn_type_cd    | varchar(1)   | NO   |     | NULL    |                |
      -- | amount         | double       | YES  |     | NULL    |                |
      -- +----------------+--------------+------+-----+---------+----------------+

Checking accounts are liabilities to banks, which means you credit a liability account to increase its value and debit a liability account to decrease its value. You should insert the initial rows into the account table with a zero avail_balance. Then, make these initial deposits:

- Credit account 123 with $500 by inserting a row into the transaction table with an account_id column value of 123 and a txn_type_cd column value of ‘C’.

- Credit account 789 with $75 by inserting a row into the transaction table with an account_id column value of 789 and a txn_type_cd column value of ‘C’.

Write an update statement to set the avail_balance column values equal to the aggregate sum of the transaction table’s rows, which treats credit transactions (those with a ‘C’ in the txn_type_cd column) as positive numbers and debit transactions (those with a ‘D’ in the txn_type_cd column) as negative numbers.
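The signed aggregate described above can be checked by hand before writing the SQL. The following is only a sketch in plain Python, not part of the book or the MySQL solution; the names signed_amount and balances are made up for illustration, and the rows are the two initial deposits from the exercise.

```python
from collections import defaultdict

# The two initial deposits from the exercise: (account_id, txn_type_cd, amount).
transactions = [
    (123, "C", 500),
    (789, "C", 75),
]


def signed_amount(txn_type_cd, amount):
    """Return a credit as a positive number and a debit as a negative number."""
    if txn_type_cd == "C":
        return amount
    if txn_type_cd == "D":
        return -amount
    raise ValueError(f"unknown txn_type_cd: {txn_type_cd!r}")


def balances(rows):
    """Sum the signed amounts per account, like SUM(CASE ...) grouped by account_id."""
    totals = defaultdict(int)
    for account_id, txn_type_cd, amount in rows:
        totals[account_id] += signed_amount(txn_type_cd, amount)
    return dict(totals)


print(balances(transactions))
```

Appending a ‘D’ row of 50 for account 123 and a ‘C’ row of 50 for account 789 to the list gives 450 and 125, which are the balances the exercise expects after the transfer.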

Generate a unit of work to transfer $50 from account 123 to account 789. You will need to insert two rows into the transaction table and update two rows in the account table. Insert the following rows:

- Debit account 123 by $50 by inserting a row into the transaction table with an account_id column value of 123 and a txn_type_cd column value of ‘D’.

- Credit account 789 with $50 by inserting a row into the transaction table with an account_id column value of 789 and a txn_type_cd column value of ‘C’.

Apply the prior update statement to set the avail_balance column values equal to the aggregate sum of the transaction table’s rows, which treats credit transactions (those with a ‘C’ in the txn_type_cd column) as positive numbers and debit transactions (those with a ‘D’ in the txn_type_cd column) as negative numbers.
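What makes these steps a unit of work is that the two inserts and the balance update either all succeed or all fail together. The sketch below illustrates that idea only; it makes several assumptions that are not in the exercise: an in-memory SQLite database (through Python’s sqlite3 module) stands in for MySQL, the tables are trimmed to the columns the transfer needs, the transfer helper is a made-up name, and the rule that both accounts must exist is added just to trigger a rollback.

```python
import sqlite3

# Assumption: in-memory SQLite stands in for MySQL; tables are simplified.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE account (
        account_id    INTEGER PRIMARY KEY,
        avail_balance REAL NOT NULL
    );
    CREATE TABLE "transaction" (
        txn_id      INTEGER PRIMARY KEY AUTOINCREMENT,
        account_id  INTEGER NOT NULL REFERENCES account (account_id),
        txn_type_cd TEXT NOT NULL,
        amount      REAL NOT NULL
    );
    INSERT INTO account VALUES (123, 500), (789, 75);
    INSERT INTO "transaction" (account_id, txn_type_cd, amount)
    VALUES (123, 'C', 500), (789, 'C', 75);
""")

INSERT_TXN = (
    'INSERT INTO "transaction" (account_id, txn_type_cd, amount) '
    "VALUES (?, ?, ?)"
)
UPDATE_BALANCES = """
    UPDATE account
       SET avail_balance = (
           SELECT SUM(CASE t.txn_type_cd
                        WHEN 'C' THEN t.amount
                        WHEN 'D' THEN -t.amount
                      END)
             FROM "transaction" t
            WHERE t.account_id = account.account_id)
     WHERE account_id IN (?, ?)
"""


def transfer(conn, from_id, to_id, amount):
    """Hypothetical helper: move amount between two accounts as one unit of work."""
    # Used as a context manager, the connection commits when the block
    # succeeds and rolls back when it raises.
    with conn:
        conn.executemany(INSERT_TXN, [(from_id, "D", amount), (to_id, "C", amount)])
        updated = conn.execute(UPDATE_BALANCES, (from_id, to_id)).rowcount
        if updated != 2:
            raise LookupError("both accounts must exist")


transfer(conn, 123, 789, 50)

try:
    transfer(conn, 123, 999, 50)  # account 999 does not exist
except LookupError:
    pass  # the rows written by the failed transfer were rolled back

print(conn.execute(
    "SELECT account_id, avail_balance FROM account ORDER BY account_id"
).fetchall())
```

The first call leaves the balances at 450 and 125. The second call fails after its inserts have already run, and the rollback removes them, so the transaction table still holds four rows.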

Here’s the solution to the problem:

    -- +--------------------+--------------+------+-----+---------+----------------+
    -- | Field              | Type         | Null | Key | Default | Extra          |
    -- +--------------------+--------------+------+-----+---------+----------------+
    -- | account_id         | int unsigned | NO   | PRI | NULL    | auto_increment |
    -- | avail_balance      | double       | NO   |     | NULL    |                |
    -- | last_activity_date | datetime     | NO   |     | NULL    |                |
    -- +--------------------+--------------+------+-----+---------+----------------+
    DROP TABLE IF EXISTS account, transaction;
    CREATE TABLE account
    ( account_id          int unsigned PRIMARY KEY AUTO_INCREMENT
    , avail_balance       double       NOT NULL
    , last_activity_date  datetime     NOT NULL )
      ENGINE=InnoDB AUTO_INCREMENT=1001 DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_0900_ai_ci;

    -- +----------------+--------------+------+-----+---------+----------------+
    -- | Field          | Type         | Null | Key | Default | Extra          |
    -- +----------------+--------------+------+-----+---------+----------------+
    -- | txn_id         | int unsigned | NO   | PRI | NULL    | auto_increment |
    -- | txn_date       | datetime     | YES  |     | NULL    |                |
    -- | account_id     | int unsigned | YES  |     | NULL    |                |
    -- | txn_type_cd    | varchar(1)   | NO   |     | NULL    |                |
    -- | amount         | double       | YES  |     | NULL    |                |
    -- +----------------+--------------+------+-----+---------+----------------+
    CREATE TABLE transaction
    ( txn_id       int unsigned PRIMARY KEY AUTO_INCREMENT
    , txn_date     datetime     NOT NULL
    , account_id   int unsigned NOT NULL
    , txn_type_cd  varchar(1)
    , amount       double
    , CONSTRAINT transaction_fk1 FOREIGN KEY (account_id) REFERENCES account(account_id))
      ENGINE=InnoDB AUTO_INCREMENT=1001 DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_0900_ai_ci;

    -- Insert initial accounts.
    INSERT INTO account
    ( account_id, avail_balance, last_activity_date )
    VALUES
    ( 123, 0, '2019-07-10 20:53:27' );

    INSERT INTO account
    ( account_id, avail_balance, last_activity_date )
    VALUES
    ( 789, 0, '2019-06-22 15:18:35' );

    -- Insert initial deposits.
    INSERT INTO transaction
    ( txn_date, account_id, txn_type_cd, amount )
    VALUES
    ( CAST(NOW() AS DATE), 123, 'C', 500 );

    INSERT INTO transaction
    ( txn_date, account_id, txn_type_cd, amount )
    VALUES
    ( CAST(NOW() AS DATE), 789, 'C', 75 );

    -- Set each balance to the signed sum of its transactions.
    UPDATE account a
    SET    a.avail_balance =
             (SELECT   SUM(
                         CASE
                           WHEN t.txn_type_cd = 'C' THEN amount
                           WHEN t.txn_type_cd = 'D' THEN amount * -1
                         END) AS amount
              FROM     transaction t
              WHERE    t.account_id = a.account_id
              AND      t.account_id IN (123,789)
              GROUP BY t.account_id);

    SELECT * FROM account;
    SELECT * FROM transaction;

    -- Transfer $50 from account 123 to account 789 as one unit of work.
    -- With autocommit on, each statement would otherwise commit by itself.
    START TRANSACTION;

    INSERT INTO transaction
    ( txn_date, account_id, txn_type_cd, amount )
    VALUES
    ( CAST(NOW() AS DATE), 123, 'D', 50 );

    INSERT INTO transaction
    ( txn_date, account_id, txn_type_cd, amount )
    VALUES
    ( CAST(NOW() AS DATE), 789, 'C', 50 );

    UPDATE account a
    SET    a.avail_balance =
             (SELECT   SUM(
                         CASE
                           WHEN t.txn_type_cd = 'C' THEN amount
                           WHEN t.txn_type_cd = 'D' THEN amount * -1
                         END) AS amount
              FROM     transaction t
              WHERE    t.account_id = a.account_id
              AND      t.account_id IN (123,789)
              GROUP BY t.account_id);

    COMMIT;

    SELECT * FROM account;
    SELECT * FROM transaction;

The results are:

    +------------+---------------+---------------------+
    | account_id | avail_balance | last_activity_date  |
    +------------+---------------+---------------------+
    |        123 |           450 | 2019-07-10 20:53:27 |
    |        789 |           125 | 2019-06-22 15:18:35 |
    +------------+---------------+---------------------+
    2 rows in set (0.00 sec)

    +--------+---------------------+------------+-------------+--------+
    | txn_id | txn_date            | account_id | txn_type_cd | amount |
    +--------+---------------------+------------+-------------+--------+
    |   1001 | 2024-04-01 00:00:00 |        123 | C           |    500 |
    |   1002 | 2024-04-01 00:00:00 |        789 | C           |     75 |
    |   1003 | 2024-04-01 00:00:00 |        123 | D           |     50 |
    |   1004 | 2024-04-01 00:00:00 |        789 | C           |     50 |
    +--------+---------------------+------------+-------------+--------+
    4 rows in set (0.00 sec)

As always, I hope this helps those trying to understand how to build a transaction as a unit of work in MySQL.

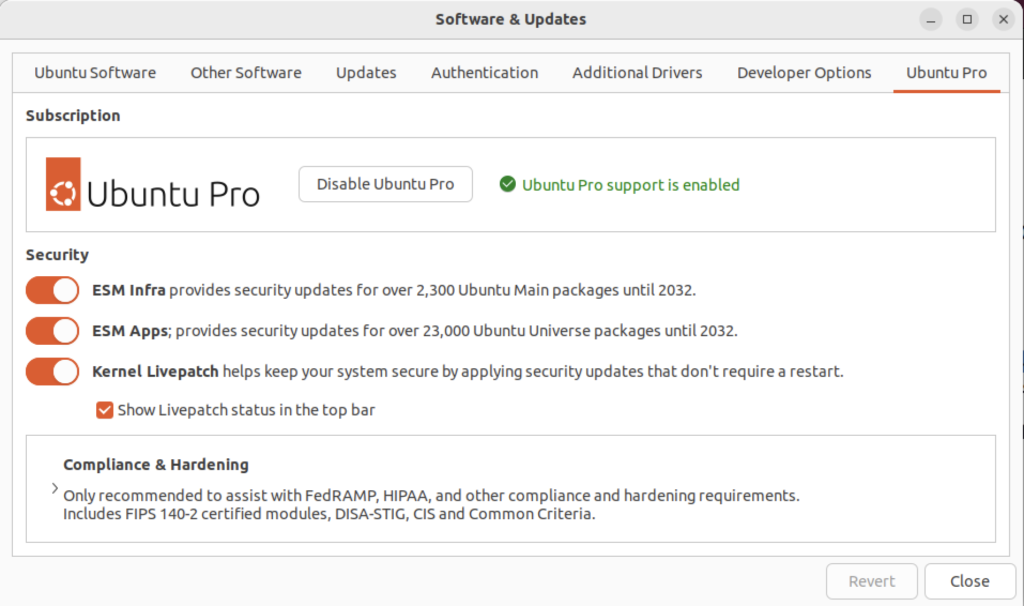

Ubuntu Pro Upgrade?

There wasn’t a choice when I chose to update the Ubuntu instance. I was compelled to upgrade to Ubuntu Pro. According to the upgrade I have five free installations. You can read more about Ubuntu Pro on this web page, and find their pricing schedule on this page.

MongoDB on Ubuntu

This post shows how to install, configure, and use MongoDB with JavaScript programs. You need to complete each section in the order provided (the steps are based on a Cherry Server post).

Step #1: MongoDB Installation

Install the prerequisite packages with the following command:

    sudo apt install -y software-properties-common gnupg apt-transport-https ca-certificates

Detailed console log:

    Reading package lists... Done
    Building dependency tree... Done
    Reading state information... Done
    ca-certificates is already the newest version (20230311ubuntu0.22.04.1).
    ca-certificates set to manually installed.
    gnupg is already the newest version (2.2.27-3ubuntu2.1).
    gnupg set to manually installed.
    software-properties-common is already the newest version (0.99.22.9).
    apt-transport-https is already the newest version (2.4.11).
    0 upgraded, 0 newly installed, 0 to remove and 22 not upgraded.

Import the public key for MongoDB on your system using the curl command:

    curl -fsSL https://pgp.mongodb.com/server-7.0.asc | sudo gpg -o /usr/share/keyrings/mongodb-server-7.0.gpg --dearmor

Add the MongoDB 7.0 APT repository to the /etc/apt/sources.list.d directory:

    echo "deb [ arch=amd64,arm64 signed-by=/usr/share/keyrings/mongodb-server-7.0.gpg ] https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0 multiverse" | sudo tee /etc/apt/sources.list.d/mongodb-org-7.0.list

Reload the local package index, which refreshes the local repositories and makes Ubuntu aware of the newly added MongoDB repository:

    sudo apt update

Detailed console log:

    Hit:1 http://us.archive.ubuntu.com/ubuntu jammy InRelease
    Get:2 http://us.archive.ubuntu.com/ubuntu jammy-updates InRelease [119 kB]
    Get:3 http://security.ubuntu.com/ubuntu jammy-security InRelease [110 kB]
    Hit:4 https://dl.google.com/linux/chrome/deb stable InRelease
    Hit:5 https://download.vscodium.com/debs vscodium InRelease
    Hit:6 http://us.archive.ubuntu.com/ubuntu jammy-backports InRelease
    Hit:7 https://ftp.postgresql.org/pub/pgadmin/pgadmin4/apt/jammy pgadmin4 InRelease
    Ign:8 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0 InRelease
    Get:9 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0 Release [2,090 B]
    Get:10 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0 Release.gpg [866 B]
    Get:11 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0/multiverse arm64 Packages [27.6 kB]
    Get:12 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0/multiverse amd64 Packages [28.6 kB]
    Fetched 288 kB in 4s (72.8 kB/s)
    Reading package lists... Done
    Building dependency tree... Done
    Reading state information... Done
    22 packages can be upgraded. Run 'apt list --upgradable' to see them.

Install the mongodb-org meta-package:

    sudo apt install -y mongodb-org

Detailed console log:

    Reading package lists... Done
    Building dependency tree... Done
    Reading state information... Done
    The following additional packages will be installed:
      mongodb-database-tools mongodb-mongosh mongodb-org-database mongodb-org-database-tools-extra mongodb-org-mongos mongodb-org-server mongodb-org-shell mongodb-org-tools
    The following NEW packages will be installed:
      mongodb-database-tools mongodb-mongosh mongodb-org mongodb-org-database mongodb-org-database-tools-extra mongodb-org-mongos mongodb-org-server mongodb-org-shell mongodb-org-tools
    0 upgraded, 9 newly installed, 0 to remove and 22 not upgraded.
    Need to get 163 MB of archives.
    After this operation, 537 MB of additional disk space will be used.
    Get:1 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0/multiverse amd64 mongodb-database-tools amd64 100.9.4 [51.9 MB]
    Get:2 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0/multiverse amd64 mongodb-mongosh amd64 2.1.5 [48.7 MB]
    Get:3 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0/multiverse amd64 mongodb-org-shell amd64 7.0.6 [2,986 B]
    Get:4 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0/multiverse amd64 mongodb-org-server amd64 7.0.6 [36.7 MB]
    Get:5 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0/multiverse amd64 mongodb-org-mongos amd64 7.0.6 [25.6 MB]
    Get:6 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0/multiverse amd64 mongodb-org-database-tools-extra amd64 7.0.6 [7,786 B]
    Get:7 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0/multiverse amd64 mongodb-org-database amd64 7.0.6 [3,422 B]
    Get:8 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0/multiverse amd64 mongodb-org-tools amd64 7.0.6 [2,770 B]
    Get:9 https://repo.mongodb.org/apt/ubuntu jammy/mongodb-org/7.0/multiverse amd64 mongodb-org amd64 7.0.6 [2,804 B]
    Fetched 163 MB in 8s (20.2 MB/s)
    Selecting previously unselected package mongodb-database-tools.
    (Reading database ... 250115 files and directories currently installed.)
    Preparing to unpack .../0-mongodb-database-tools_100.9.4_amd64.deb ...
    Unpacking mongodb-database-tools (100.9.4) ...
    Selecting previously unselected package mongodb-mongosh.
    Preparing to unpack .../1-mongodb-mongosh_2.1.5_amd64.deb ...
    Unpacking mongodb-mongosh (2.1.5) ...
    Selecting previously unselected package mongodb-org-shell.
    Preparing to unpack .../2-mongodb-org-shell_7.0.6_amd64.deb ...
    Unpacking mongodb-org-shell (7.0.6) ...
    Selecting previously unselected package mongodb-org-server.
    Preparing to unpack .../3-mongodb-org-server_7.0.6_amd64.deb ...
    Unpacking mongodb-org-server (7.0.6) ...
    Selecting previously unselected package mongodb-org-mongos.
    Preparing to unpack .../4-mongodb-org-mongos_7.0.6_amd64.deb ...
    Unpacking mongodb-org-mongos (7.0.6) ...
    Selecting previously unselected package mongodb-org-database-tools-extra.
    Preparing to unpack .../5-mongodb-org-database-tools-extra_7.0.6_amd64.deb ...
    Unpacking mongodb-org-database-tools-extra (7.0.6) ...
    Selecting previously unselected package mongodb-org-database.
    Preparing to unpack .../6-mongodb-org-database_7.0.6_amd64.deb ...
    Unpacking mongodb-org-database (7.0.6) ...
    Selecting previously unselected package mongodb-org-tools.
    Preparing to unpack .../7-mongodb-org-tools_7.0.6_amd64.deb ...
    Unpacking mongodb-org-tools (7.0.6) ...
    Selecting previously unselected package mongodb-org.
    Preparing to unpack .../8-mongodb-org_7.0.6_amd64.deb ...
    Unpacking mongodb-org (7.0.6) ...
    Setting up mongodb-mongosh (2.1.5) ...
    Setting up mongodb-org-server (7.0.6) ...
    Adding system user `mongodb' (UID 132) ...
    Adding new user `mongodb' (UID 132) with group `nogroup' ...
    Not creating home directory `/home/mongodb'.
    Adding group `mongodb' (GID 140) ...
    Done.
    Adding user `mongodb' to group `mongodb' ...
    Adding user mongodb to group mongodb
    Done.
    Setting up mongodb-org-shell (7.0.6) ...
    Setting up mongodb-database-tools (100.9.4) ...
    Setting up mongodb-org-mongos (7.0.6) ...
    Setting up mongodb-org-database-tools-extra (7.0.6) ...
    Setting up mongodb-org-database (7.0.6) ...
    Setting up mongodb-org-tools (7.0.6) ...
    Setting up mongodb-org (7.0.6) ...
    Processing triggers for man-db (2.10.2-1) ...

Verify the installed version of MongoDB with this command:

    mongod --version

It should display:

    db version v7.0.6
    Build Info: {
        "version": "7.0.6",
        "gitVersion": "66cdc1f28172cb33ff68263050d73d4ade73b9a4",
        "openSSLVersion": "OpenSSL 3.0.2 15 Mar 2022",
        "modules": [],
        "allocator": "tcmalloc",
        "environment": {
            "distmod": "ubuntu2204",
            "distarch": "x86_64",
            "target_arch": "x86_64"
        }
    }

Step #2: Start MongoDB Service & Shell

You can verify that the mongod service is disabled after the initial installation with this command:

    sudo systemctl status mongod

It should display:

    ○ mongod.service - MongoDB Database Server
         Loaded: loaded (/lib/systemd/system/mongod.service; disabled; vendor preset: enabled)
         Active: inactive (dead)
           Docs: https://docs.mongodb.org/manual

Exit the output display from the systemctl utility by typing q (a colon followed by a q also works).

You can start the MongoDB service with this command:

    sudo systemctl start mongod

Then, check the MongoDB service:

    sudo systemctl status mongod

It displays:

    ● mongod.service - MongoDB Database Server
         Loaded: loaded (/lib/systemd/system/mongod.service; disabled; vendor preset: enabled)
         Active: active (running) since Thu 2024-03-07 16:38:17 MST; 2s ago
           Docs: https://docs.mongodb.org/manual
       Main PID: 33795 (mongod)
         Memory: 79.2M
            CPU: 706ms
         CGroup: /system.slice/mongod.service
                 └─33795 /usr/bin/mongod --config /etc/mongod.conf

    Mar 07 16:38:17 student-virtual-machine systemd[1]: Started MongoDB Database Server.
    Mar 07 16:38:17 student-virtual-machine mongod[33795]: {"t":{"$date":"2024-03-07T23:38:17.642Z"},"s">

You can confirm that the database is up and running by checking if the server is listening on its default port, which is port 27017. Run the ss command to check the port number.

    sudo ss -pnltu | grep 27017

It will display:

    tcp   LISTEN 0      4096       127.0.0.1:27017      0.0.0.0:*    users:(("mongod",pid=33795,fd=14))
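The same check can be made from a script by attempting a TCP connection to the port. This is a minimal sketch that uses only Python’s standard socket module; the is_port_open function name is made up for illustration, and the host and port defaults simply mirror the ss output above.

```python
import socket


def is_port_open(host="127.0.0.1", port=27017, timeout=1.0):
    """Return True when a TCP connection to host:port succeeds."""
    try:
        # create_connection raises OSError (for example, connection
        # refused or a timeout) when nothing accepts the connection.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    state = "listening" if is_port_open() else "not reachable"
    print(f"mongod on 127.0.0.1:27017 is {state}")
```

A successful connection only shows that something is listening on the port. It doesn’t confirm that the listener is mongod, which is what the users column in the ss output tells you.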

You can enable the mongod service at startup with the following command:

    sudo systemctl enable mongod

It displays the following message, which confirms that the service is now enabled:

    Created symlink /etc/systemd/system/multi-user.target.wants/mongod.service → /lib/systemd/system/mongod.service.

Now, start the MongoDB Shell (mongosh) by typing either the explicit or implicit MongoDB Shell command. The explicit one uses the port and database path, which are unnecessary when you’ve successfully started the mongod service. (Please note that at the time of writing this blog post there is erroneous, or obsolete, content on the MongoDB Documentation Enable Access Control web page.)

Explicit connection:

    mongosh --port 27017 --db /var/lib/mongodb --help

This version of the command will display most of the options available in MongoDB but it will suppress warning messages.

      $ mongosh [options] [db address] [file names (ending in .js or .mongodb)]

      Options:

        -h, --help                                  Show this usage information
        -f, --file [arg]                            Load the specified mongosh script
            --host [arg]                            Server to connect to
            --port [arg]                            Port to connect to
            --build-info                            Show build information
            --version                               Show version information
            --quiet                                 Silence output from the shell during the connection process
            --shell                                 Run the shell after executing files
            --nodb                                  Don't connect to mongod on startup - no 'db address' [arg] expected
            --norc                                  Will not run the '.mongoshrc.js' file on start up
            --eval [arg]                            Evaluate javascript
            --json[=canonical|relaxed]              Print result of --eval as Extended JSON, including errors
            --retryWrites[=true|false]              Automatically retry write operations upon transient network errors (Default: true)

      Authentication Options:

        -u, --username [arg]                        Username for authentication
        -p, --password [arg]                        Password for authentication
            --authenticationDatabase [arg]          User source (defaults to dbname)
            --authenticationMechanism [arg]         Authentication mechanism
            --awsIamSessionToken [arg]              AWS IAM Temporary Session Token ID
            --gssapiServiceName [arg]               Service name to use when authenticating using GSSAPI/Kerberos
            --sspiHostnameCanonicalization [arg]    Specify the SSPI hostname canonicalization (none or forward, available on Windows)
            --sspiRealmOverride [arg]               Specify the SSPI server realm (available on Windows)

      TLS Options:

            --tls                                   Use TLS for all connections
            --tlsCertificateKeyFile [arg]           PEM certificate/key file for TLS
            --tlsCertificateKeyFilePassword [arg]   Password for key in PEM file for TLS
            --tlsCAFile [arg]                       Certificate Authority file for TLS
            --tlsAllowInvalidHostnames              Allow connections to servers with non-matching hostnames
            --tlsAllowInvalidCertificates           Allow connections to servers with invalid certificates
            --tlsCertificateSelector [arg]          TLS Certificate in system store (Windows and macOS only)
            --tlsCRLFile [arg]                      Specifies the .pem file that contains the Certificate Revocation List
            --tlsDisabledProtocols [arg]            Comma separated list of TLS protocols to disable [TLS1_0,TLS1_1,TLS1_2]
            --tlsUseSystemCA                        Load the operating system trusted certificate list
            --tlsFIPSMode                           Enable the system TLS library's FIPS mode

      API version options:

            --apiVersion [arg]                      Specifies the API version to connect with
            --apiStrict                             Use strict API version mode
            --apiDeprecationErrors                  Fail deprecated commands for the specified API version

      FLE Options:

            --awsAccessKeyId [arg]                  AWS Access Key for FLE Amazon KMS
            --awsSecretAccessKey [arg]              AWS Secret Key for FLE Amazon KMS
            --awsSessionToken [arg]                 Optional AWS Session Token ID
            --keyVaultNamespace [arg]               database.collection to store encrypted FLE parameters
            --kmsURL [arg]                          Test parameter to override the URL of the KMS endpoint

      DB Address Examples:

            foo                                     Foo database on local machine
            192.168.0.5/foo                         Foo database on 192.168.0.5 machine
            192.168.0.5:9999/foo                    Foo database on 192.168.0.5 machine on port 9999
            mongodb://192.168.0.5:9999/foo          Connection string URI can also be used

      File Names:

            A list of files to run. Files must end in .js and will exit after unless --shell is specified.

      Examples:

            Start mongosh using 'ships' database on specified connection string:
            $ mongosh mongodb://192.168.0.5:9999/ships

      For more information on usage: https://docs.mongodb.com/mongodb-shell.

Implicit connection:

    mongosh

You should see the following message with any warning messages:

    Current Mongosh Log ID: 65ea502a97f4c1e2b7e12af4
    Connecting to:          mongodb://127.0.0.1:27017/?directConnection=true&serverSelectionTimeoutMS=2000&appName=mongosh+2.1.5
    Using MongoDB:          7.0.6
    Using Mongosh:          2.1.5

    For mongosh info see: https://docs.mongodb.com/mongodb-shell/

    To help improve our products, anonymous usage data is collected and sent to MongoDB periodically (https://www.mongodb.com/legal/privacy-policy).
    You can opt-out by running the disableTelemetry() command.

    ------
       The server generated these startup warnings when booting
       2024-03-07T16:38:17.818-07:00: Using the XFS filesystem is strongly recommended with the WiredTiger storage engine. See http://dochub.mongodb.org/core/prodnotes-filesystem
       2024-03-07T16:38:18.350-07:00: Access control is not enabled for the database. Read and write access to data and configuration is unrestricted
       2024-03-07T16:38:18.350-07:00: vm.max_map_count is too low
    ------

You can opt out of the data collection by running the disableTelemetry() command from the Linux command line. Use the following command (a broader explanation is in the MongoDB Telemetry documentation):

    mongosh --nodb --eval "disableTelemetry()"

It should return:

    Current Mongosh Log ID: 65eab2df3e663bde3711fa2f
    Using Mongosh:          2.1.5

    For mongosh info see: https://docs.mongodb.com/mongodb-shell/

    Telemetry is now disabled.

You still have three warning messages to deal with at this point. You should fix the vm.max_map_count warning first. This is a Linux kernel issue. You can determine the current value of the vm.max_map_count parameter with this command:

    cat /proc/sys/vm/max_map_count

It should return the system default value:

    65530

You can change it at runtime with this command:

    sudo sysctl -w vm.max_map_count=262144

However, you must restart the mongod service to see the change in the mongosh shell. After the restart, the warning about the kernel parameter value being too low won’t reappear until you reboot your operating system, because a value set with sysctl -w doesn’t survive a reboot. You can restart your mongod service with this command:

    sudo service mongod restart

You can make a change to the /etc/sysctl.conf file to ensure the parameter is set to the correct value each time the system reboots. Simply add the following lines to your /etc/sysctl.conf file as the root user, or by prefacing a text editor of your choice (like vim or nano) with sudo:

    # Adding vm.max_map_count to sysctl.conf defaults.
    vm.max_map_count=262144
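After a reboot you may want to confirm that the setting persisted. The sketch below reads the same /proc file as the cat command above and compares it with the value this post sets. The is_too_low function and the constant names are made up for illustration, and 262144 is used as the threshold only because it is the value chosen in this post.

```python
from pathlib import Path

# Assumption: the threshold is the value this post sets with sysctl.
RECOMMENDED_MAX_MAP_COUNT = 262144
PROC_FILE = Path("/proc/sys/vm/max_map_count")


def is_too_low(raw_value, minimum=RECOMMENDED_MAX_MAP_COUNT):
    """Return True when the value read from /proc is below the minimum."""
    return int(raw_value.strip()) < minimum


if __name__ == "__main__":
    if PROC_FILE.exists():  # the file is only present on Linux
        raw = PROC_FILE.read_text()
        verdict = "too low" if is_too_low(raw) else "ok"
        print(f"vm.max_map_count = {raw.strip()} ({verdict})")
    else:
        print(f"{PROC_FILE} not found; this check only applies to Linux")
```

With the default of 65530 the script reports the value as too low, and after the change it reports ok.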

At this point, you’ve eliminated two of the warning messages. The next step shows you how to enable Access Control. If you want to check the general server status, run the following command from the Linux Command-Line Interface (CLI):

    mongosh --eval "db.serverStatus()" > server_status.log

You can inspect the log file, which should be slightly less than 2,000 lines of output with a MongoDB 7.0.6 installation. Redirecting the output from the Linux CLI is generally the easiest way to inspect the output from the db.serverStatus() function, which is too long to scroll through in the console.

Step #3: MongoDB Enabling Access Control

Connect to the mongosh …

Step #4: MongoDB Installing Node.js and React.js

Install Node.js with the following command:

    sudo apt install -y nodejs

Detailed console log:

    Reading package lists... Done
    Building dependency tree... Done
    Reading state information... Done
    The following additional packages will be installed:
      libc-ares2 libjs-highlight.js libnode72 nodejs-doc
    Suggested packages:
      npm
    The following NEW packages will be installed:
      libc-ares2 libjs-highlight.js libnode72 nodejs nodejs-doc
    0 upgraded, 5 newly installed, 0 to remove and 23 not upgraded.
    Need to get 13.7 MB of archives.
    After this operation, 53.9 MB of additional disk space will be used.
    Do you want to continue? [Y/n] y
    Get:1 http://us.archive.ubuntu.com/ubuntu jammy/universe amd64 libjs-highlight.js all 9.18.5+dfsg1-1 [367 kB]
    Get:2 http://us.archive.ubuntu.com/ubuntu jammy-updates/main amd64 libc-ares2 amd64 1.18.1-1ubuntu0.22.04.3 [45.1 kB]
    Get:3 http://us.archive.ubuntu.com/ubuntu jammy-updates/universe amd64 libnode72 amd64 12.22.9~dfsg-1ubuntu3.4 [10.8 MB]
    Get:4 http://us.archive.ubuntu.com/ubuntu jammy-updates/universe amd64 nodejs-doc all 12.22.9~dfsg-1ubuntu3.4 [2,410 kB]
    Get:5 http://us.archive.ubuntu.com/ubuntu jammy-updates/universe amd64 nodejs amd64 12.22.9~dfsg-1ubuntu3.4 [122 kB]
    Fetched 13.7 MB in 3s (4,006 kB/s)
    Selecting previously unselected package libjs-highlight.js.
    (Reading database ... 250172 files and directories currently installed.)
    Preparing to unpack .../libjs-highlight.js_9.18.5+dfsg1-1_all.deb ...
    Unpacking libjs-highlight.js (9.18.5+dfsg1-1) ...
    Selecting previously unselected package libc-ares2:amd64.
    Preparing to unpack .../libc-ares2_1.18.1-1ubuntu0.22.04.3_amd64.deb ...
    Unpacking libc-ares2:amd64 (1.18.1-1ubuntu0.22.04.3) ...
    Selecting previously unselected package libnode72:amd64.
    Preparing to unpack .../libnode72_12.22.9~dfsg-1ubuntu3.4_amd64.deb ...
    Unpacking libnode72:amd64 (12.22.9~dfsg-1ubuntu3.4) ...
    Selecting previously unselected package nodejs-doc.
    Preparing to unpack .../nodejs-doc_12.22.9~dfsg-1ubuntu3.4_all.deb ...
    Unpacking nodejs-doc (12.22.9~dfsg-1ubuntu3.4) ...
    Selecting previously unselected package nodejs.
    Preparing to unpack .../nodejs_12.22.9~dfsg-1ubuntu3.4_amd64.deb ...
    Unpacking nodejs (12.22.9~dfsg-1ubuntu3.4) ...
    Setting up libc-ares2:amd64 (1.18.1-1ubuntu0.22.04.3) ...
    Setting up libnode72:amd64 (12.22.9~dfsg-1ubuntu3.4) ...
    Setting up libjs-highlight.js (9.18.5+dfsg1-1) ...
    Setting up nodejs (12.22.9~dfsg-1ubuntu3.4) ...
    update-alternatives: using /usr/bin/nodejs to provide /usr/bin/js (js) in auto mode
    Setting up nodejs-doc (12.22.9~dfsg-1ubuntu3.4) ...
    Processing triggers for man-db (2.10.2-1) ...
    Processing triggers for libc-bin (2.35-0ubuntu3.6) ...

You can check the Node.js version with this command:

    node -v

    v12.22.9

Install the Node.js package manager npm with the following command:

    sudo apt install -y npm

Detailed console log: